AI Agents & DSL Console

Chat agents, a DSL REPL, and per-device sound design. Cloud or local inference.

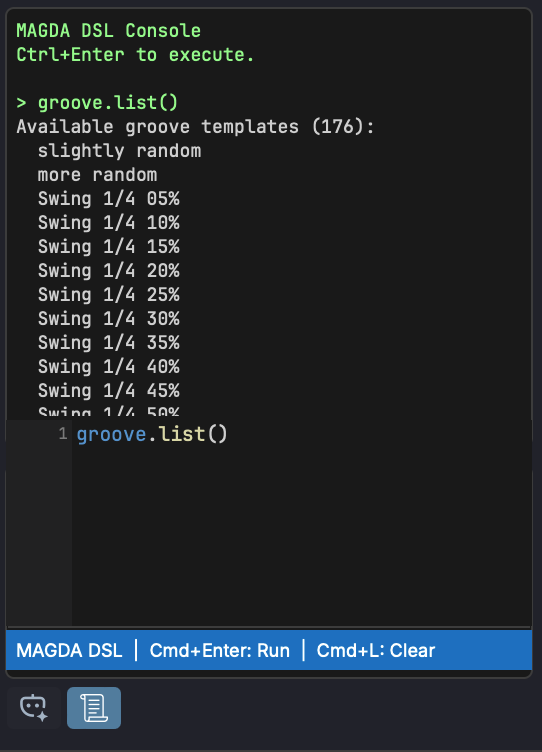

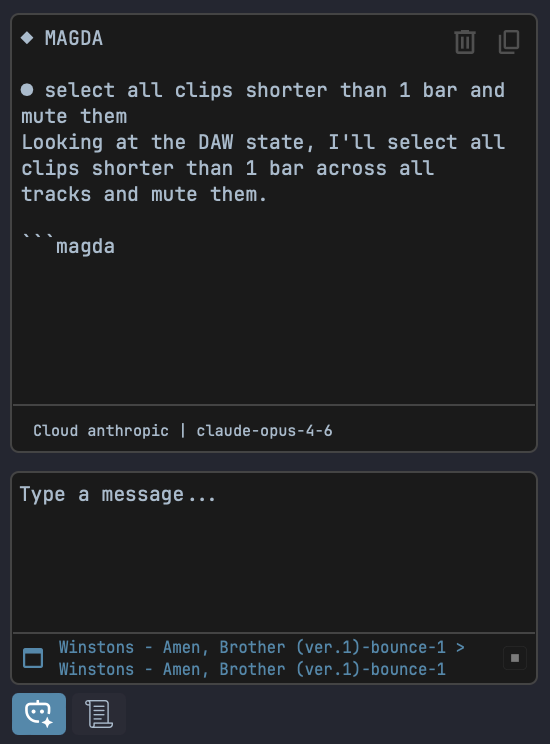

MAGDA ships with AI agents that understand your session and act on it by emitting commands in a built-in domain-specific language (DSL). You can drive the agents through the chat, or type DSL commands directly in the REPL.
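To make the idea concrete, here is a minimal sketch of the pattern: a model emits plain-text commands, and one executor applies them to session state, whether they came from the chat or were typed by hand. The command syntax (`track add`, `track volume`), the `Session` class, and the `execute` function are all hypothetical illustrations, not MAGDA's actual DSL or API.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Toy stand-in for project state; not MAGDA's real data model."""
    tracks: dict = field(default_factory=dict)  # track name -> volume in dB
    log: list = field(default_factory=list)     # audit trail of applied commands


def execute(session: Session, line: str) -> None:
    """Parse one command in a made-up syntax and apply it to the session."""
    parts = line.split()
    if len(parts) == 3 and parts[:2] == ["track", "add"]:
        session.tracks[parts[2]] = 0.0
    elif len(parts) == 4 and parts[:2] == ["track", "volume"]:
        if parts[2] not in session.tracks:
            raise ValueError(f"unknown track: {parts[2]}")
        session.tracks[parts[2]] = float(parts[3])
    else:
        raise ValueError(f"unrecognized command: {line!r}")
    session.log.append(line)  # only commands that succeeded are logged


session = Session()

# Text emitted by a model (hard-coded here) goes through the executor...
agent_output = "track add bass\ntrack volume bass -6"
for command in agent_output.splitlines():
    execute(session, command)

# ...and so does a command typed by hand, as it would be in a REPL.
execute(session, "track add lead")
```

Because every change passes through the same text commands, the log doubles as a readable record of what the agent did, which is one plausible reading of "auditable" in this context.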

New in 0.7: per-device sound-design agents. The 4OSC has its own AI panel docked next to the synth — type a description of the sound, and the model streams back a preset and applies it directly. The preset name and category come along too, so saving into the new preset system is two clicks. More devices to follow.

MAGDA supports cloud providers (OpenAI, Anthropic, Google) as well as fully local inference via llama.cpp, which requires no API keys.
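As a rough illustration of why switching between cloud and local can be a settings change rather than a code change: llama.cpp's bundled `llama-server` exposes an OpenAI-compatible `/v1/chat/completions` endpoint (port 8080 by default), so the same request shape can target either backend. The sketch below only builds the requests and does not send them. Whether MAGDA talks to llama.cpp this way is an assumption; `build_chat_request`, the model names, and the key are placeholders.

```python
import json
import urllib.request


def build_chat_request(prompt, base_url, model, api_key=None):
    """Build (but do not send) an OpenAI-style chat completion request."""
    headers = {"Content-Type": "application/json"}
    if api_key:  # cloud endpoints need a key; a local server typically does not
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    )
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=body.encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Local: assumes a llama-server instance on its default port; no key involved.
local = build_chat_request("warm analog pad", "http://localhost:8080", "local-model")

# Cloud: same payload shape, different base URL, plus a (placeholder) API key.
cloud = build_chat_request(
    "warm analog pad", "https://api.openai.com", "cloud-model", api_key="sk-PLACEHOLDER"
)
```

Only the base URL, model name, and key differ between the two requests, so a provider switch reduces to swapping those three values.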

Read more: DSL over structured output — when it makes sense and why (Medium)

- Agents operate on real project state, not a preview

- DSL REPL for direct, auditable commands

- Per-device sound-design agents starting with 4OSC (new in 0.7)

- Switch between cloud and local models from settings